Friends,

On September 25th, 2023, OpenAI announced that ChatGPT can now see, hear, and speak.

In a recent paper, Microsoft researchers even try to legitimize the tool. It looks like a must-read for GPT-4V power users 👀, since it is packed with examples:

A 166-page report from Microsoft that qualitatively explores GPT-4V's capabilities and usage. It describes visual + text prompting techniques, few-shot learning, reasoning, and more.

But who among you will actually read the paper? You want to see the demos. There are plenty of viral ones, hustle-bro memes included, but are they what they claim to be? Let's explore.

GPT-4V even started trending on Twitter moments after I finished this article yesterday.

I took the liberty of embedding some of the viral videos about GPT-4V below:

You can decide for yourself whether there is any real product-market fit or real business value here. OpenAI has a habit of releasing products in a state unfit for consumption. That's not to say that GPT-4V won't evolve into some pretty impressive capabilities.

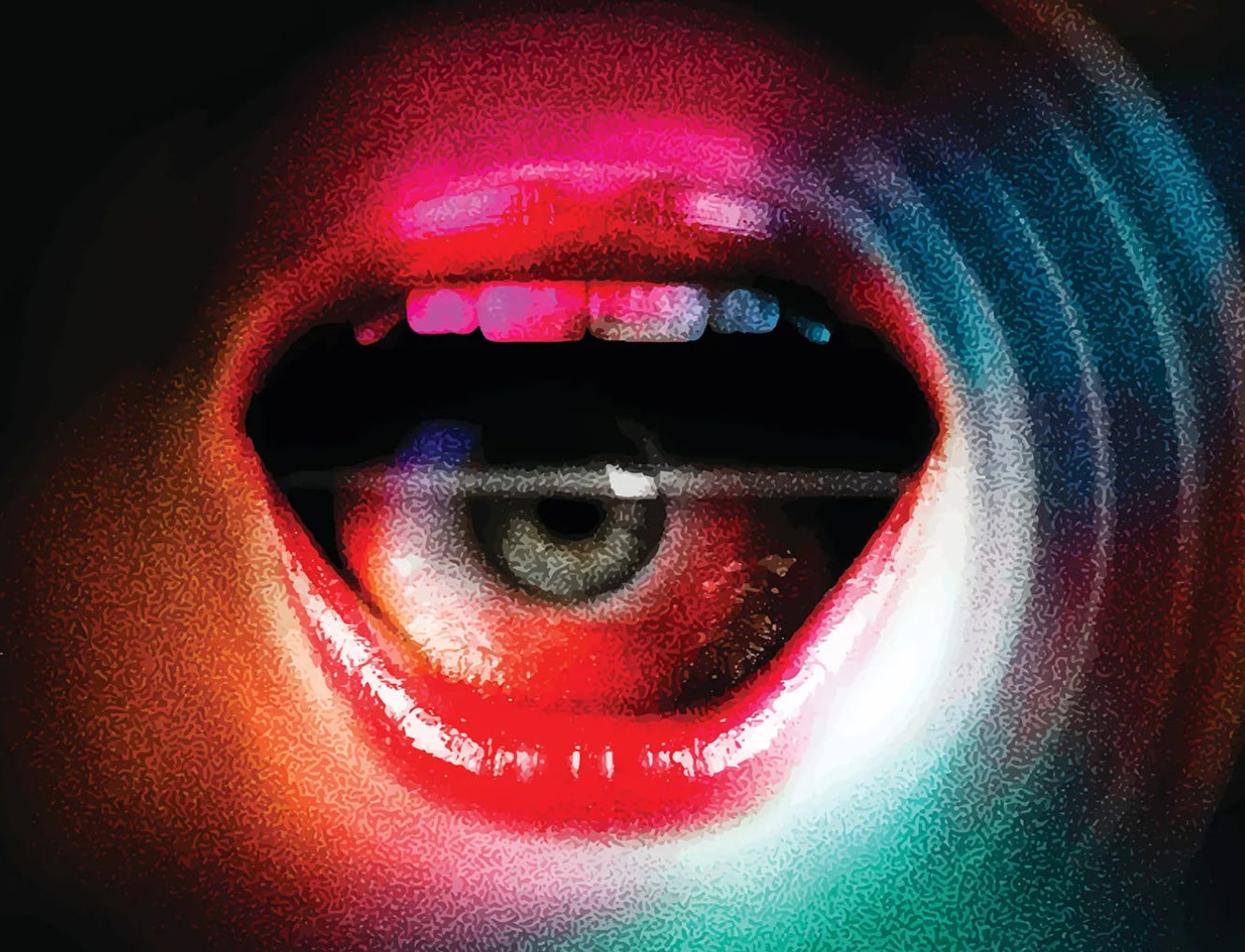

There is more than meets the 👁️ eye here.

Subscribe to AI Supremacy to keep reading this post and get 7 days of free access to the full post archives.